Extreme Processing Performance

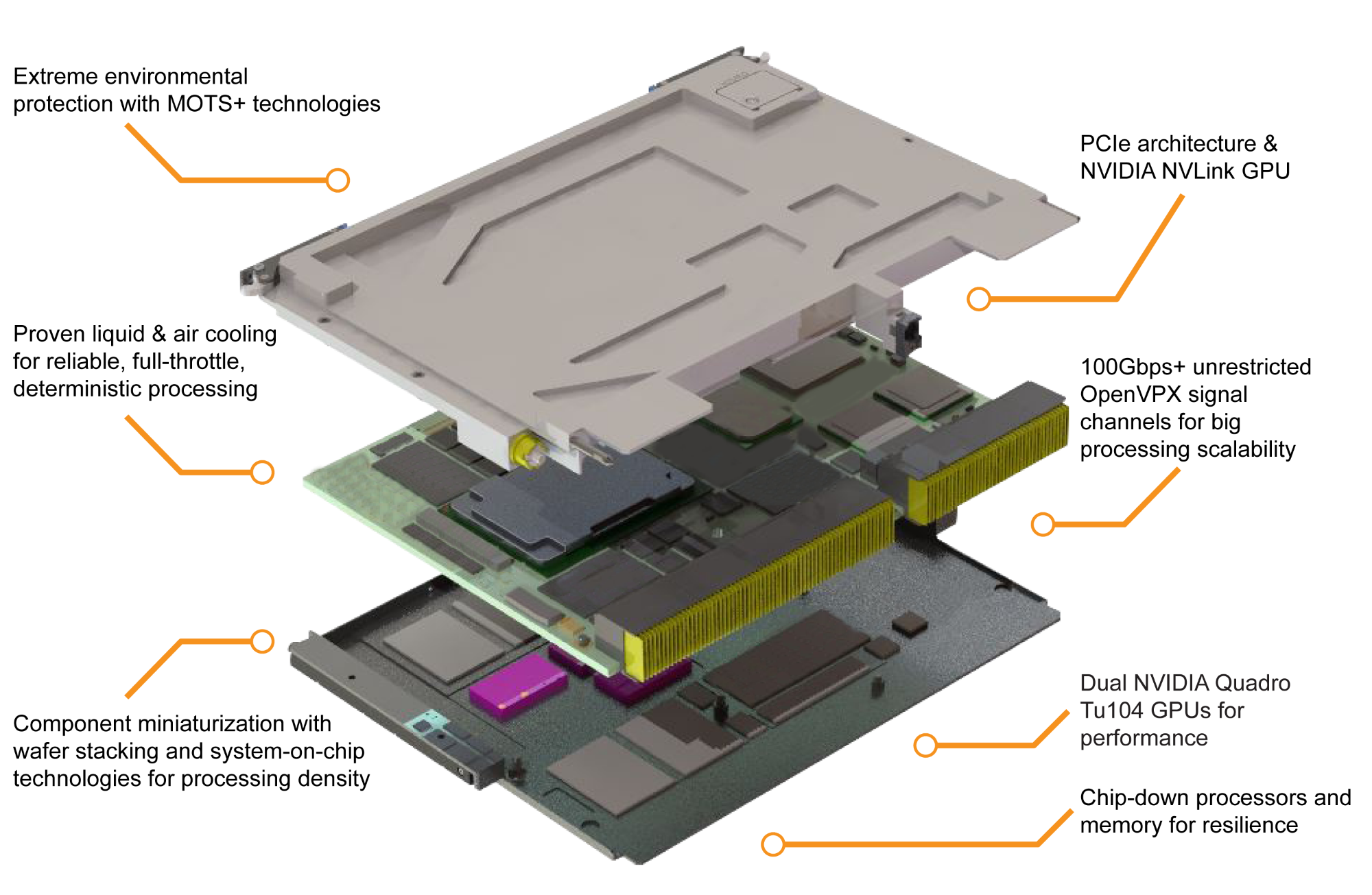

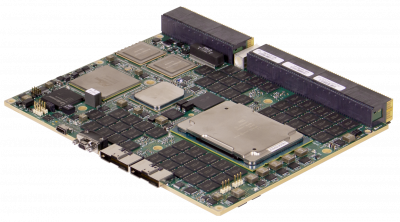

GSC6204 modules incorporate the NVIDIA Turing™ GPU architecture to bring the latest commercial processing advancements and scalability to the embedded domain. Each module is powered by dual NVIDIA Quadro TU104 GPUs. It uses NVIDIA's NVLink high-speed direct GPU-to-GPU interconnect and a PCIe architecture to bring the massively parallel processing capability of contemporary data centers to your edge application. For enhanced performance, each GPU includes NVIDIA Tensor Cores, which perform a mixed-precision matrix multiply-accumulate in a single operation. This operation is a key building block of many AI, deep learning, signal processing, and fusion workloads.
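To illustrate what "mixed-precision multiply-accumulate" means, the sketch below emulates the arithmetic on a CPU with NumPy. It computes D = A × B + C with the inputs rounded to FP16 and the accumulation carried out in FP32, which is one of the modes Turing Tensor Cores support. This is an illustration only. Real Tensor Core code is written with NVIDIA's CUDA libraries and APIs, not with NumPy. The function names `tensor_core_mma` and `fp16_accumulate_dot` are hypothetical. The hardware's internal rounding and summation order may also differ from this emulation.

```python
import numpy as np

def tensor_core_mma(a, b, c):
    """Hypothetical CPU emulation of D = A @ B + C with FP16 inputs, FP32 accumulate."""
    a16 = np.asarray(a, dtype=np.float16)  # inputs rounded to half precision
    b16 = np.asarray(b, dtype=np.float16)
    c32 = np.asarray(c, dtype=np.float32)  # accumulator held in single precision
    # FP16 x FP16 products are exact in FP32; the sums are rounded in FP32.
    return a16.astype(np.float32) @ b16.astype(np.float32) + c32

def fp16_accumulate_dot(x, y):
    """Hypothetical contrast case: a dot product that accumulates in FP16 only."""
    acc = np.float16(0)
    for xi, yi in zip(np.asarray(x, np.float16), np.asarray(y, np.float16)):
        acc = np.float16(acc + xi * yi)  # every partial sum is rounded to FP16
    return acc

ones = np.ones(4096)
d = tensor_core_mma(ones[np.newaxis, :], ones[:, np.newaxis], np.zeros((1, 1)))
print(float(d[0, 0]))                          # 4096.0
print(float(fp16_accumulate_dot(ones, ones)))  # 2048.0
```

The FP16-only sum stalls at 2048 because 2049 is not representable in half precision and rounds back to 2048. Keeping FP16 inputs while accumulating in FP32 preserves most of the speed and memory benefit of half precision and avoids this kind of error growth.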

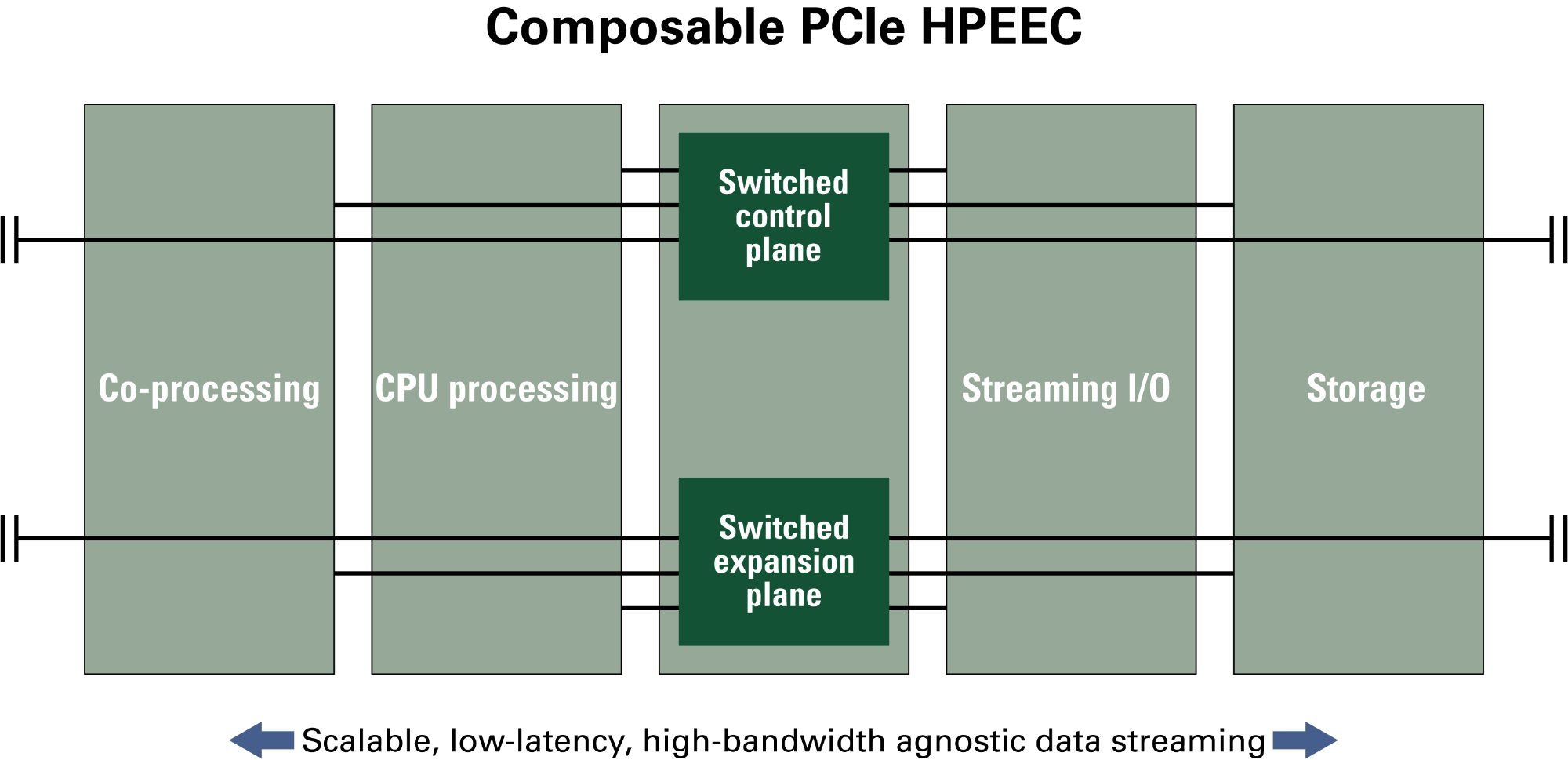

Embedded Composable Data Center

GSC6204 GPU coprocessing engines are a critical component of a truly composable high-performance embedded edge compute (HPEEC) environment, with processing power unmatched by competing approaches. GSC6204 modules can be combined with Mercury's HDS6605 Intel® Xeon® Scalable server blades, SCM6010 fast storage, SFM6126 wideband PCIe switches, and IOM-400 streaming I/O modules to form a complete HPEEC environment. This approach leverages the same tools, architecture, and software used in the data center, so your application can scale from the cloud to the edge with ease.

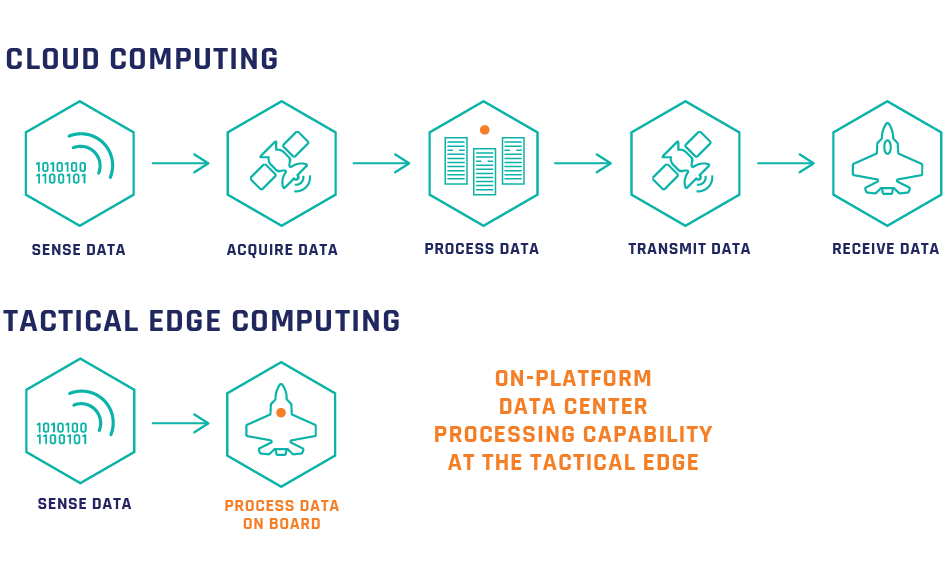

Deploy Smarter, More Autonomous Platforms

Place compute-intensive sensor processing solutions that require data center capabilities closer to the data source, at the edge. Efficiently process streaming data over a distributed, heterogeneous, scalable, PCIe-enabled architecture common to the data center. Drive affordability and program velocity while lowering program risk with an architecture that evolves at the speed of technology, follows industry technology roadmaps, and leverages data center hardware, software, and IP.

FASTER DECISION-MAKING

Accelerate AI Anywhere

We bring the latest in processing technology directly to the sensor. As a result, applications such as cognitive electronic warfare (EW) can use AI to identify new patterns in detected data and develop an appropriate response nearly instantaneously.

RELATED PRODUCTS

OpenVPX Embedded Processing

Pre-integrated, SWaP-optimized, customizable OpenVPX multifunction subsystems with program support services and security.

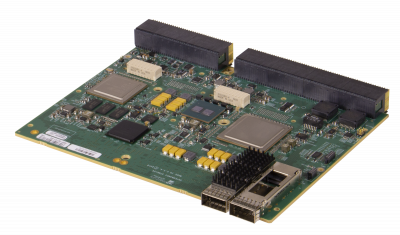

HDS6605 6U OpenVPX Multiprocessing Board

Intel Xeon Scalable 22-core server-class CPU, 192 GB DDR4, 100 GbE, PCIe Gen3, MOTS+ protection

SFM6126 6U OpenVPX Network Switch Board

20x PCIe Gen3, 2x PCIe, 16x 10GbE, 2x XMC I/O, 12V, front I/O, IPMC sys. mgmt, extreme environmental protection

SCM6010 & CAN6000 6U OpenVPX SSD Storage

Up to 24 TB storage, removable canister, 48x PCIe Gen3 switching, dual IPMB, MOTS+ protection