GPU Processing at the Edge

Uncompromised data center processing capability deployable anywhere

Evolving compute-intensive applications in AI, SIGINT, autonomous vehicles, electronic warfare (EW), radar and sensor fusion require data center-class processing closer to the data source, at the edge. This has driven HPC to evolve into high performance embedded edge computing (HPEEC). Delivering HPEEC capabilities is challenging because every application has its own survivability, processing, footprint, and security requirements. To address this need, we partner with technology leaders, including NVIDIA, to align technology roadmaps and deliver cutting-edge computing in scalable, field-deployable form factors that are fully configurable to each unique mission.

What it delivers: HPEEC leverages the latest data center processing and co-processing technologies to accelerate the most demanding workloads in the harshest and most contested environments. Customer benefits include:

· The ability to scale compute applications from the cloud to the edge with rugged embedded subsystems that adhere to open standards and integrate the latest commercial technologies.

· Maximized throughput with contemporary NVIDIA® graphics processing units (GPUs), Intel® Xeon® Scalable server-class processors, contemporary field-programmable gate array (FPGA) accelerators, and high-speed, low-latency networking.

· Advanced embedded security options that deliver trusted performance and safeguard critical data.

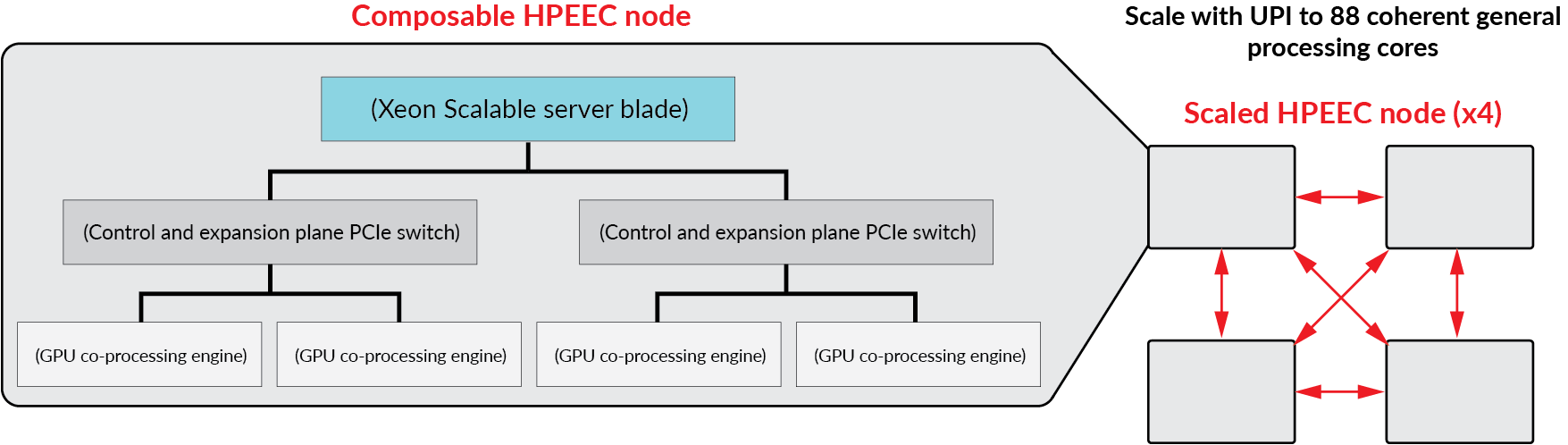

Scaling

We work closely with technology leaders to deliver a composable data center architecture that can be deployed anywhere. As a Preferred Member of the NVIDIA OEM Partner Program, our engineering teams leverage their collective capabilities to embed and secure the latest GPU co-processing resources for defense and aerospace applications. Packaged as rugged OpenVPX modules, these system building blocks are a critical HPEEC scaling element. For even greater interoperability and scalability, these GPU co-processing engines are aligned with the Sensor Open System Architecture (SOSA). In this age of smarter everything, SOSA seeks to place the best technology in the hands of service men and women more quickly.

Maximized throughput

Delivering uncompromised data center performance at the edge requires environmental protection. Our proven fifth generation of advanced packaging, cooling and interconnect technology protects electronics from the harshest environments, keeps them cool for long, reliable service lives and enables the fastest switch fabric performance in any environment. Working closely with technology leaders like Intel enables us to package general-purpose processing together with hardware-enabled AI accelerators as miniaturized OpenVPX blades that form another pillar of a truly composable HPEEC solution (fig 1).

Security

Security has always been important, and today it is critical. The closer processing moves to the edge, the more critical this requirement becomes. Proven across tens of defense programs, our embedded BuiltSECURE™ technologies counter nation-state reverse engineering with systems security engineering (SSE). BuiltSECURE technology is extensible, delivering system-wide security that evolves over time and builds in future-proofing. As countermeasures are developed to offset emerging threats, the BuiltSECURE framework keeps pace, maintaining system-wide integrity.

What’s next?

We will soon announce an expansion of our portfolio of NVIDIA-powered OpenVPX co-processor engines with the introduction of configurations powered by dual Quadro TU-104 GPUs. These rugged co-processing engines will feature greater BuiltSECURE capabilities, making them exportable and enabling them to be deployed anywhere. These options will fully implement NVIDIA’s new NVLink™ high-speed GPU-to-GPU bus to deliver uncompromised data center capability at the edge.

To learn more, visit GTC and see Devon Yablonski present “GPU processing at the edge” live - #GTC19
